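Machine learning, an application of artificial intelligence, lets software improve at a task by learning from data instead of following hand-written rules. With a machine learning algorithm, a program can pick up new skills without being explicitly programmed for them.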

Machine learning grew out of the mathematical modeling of neural networks. In a 1943 paper, neuroscientist Warren McCulloch and logician Walter Pitts attempted to map out mathematically the thought and decision-making processes of human cognition.

In this article, I take you on a brief journey through history to look at the beginnings of machine learning and some of its most recent achievements. Let’s start.

Brief History Of Machine Learning

1950 – Alan Turing proposes the “Turing Test” to determine whether a computer possesses true intelligence. To pass the test, a computer must convince a person that it is also a person.

1952 – Arthur Samuel writes the first computer learning program. The program played checkers, and the IBM computer improved the more it played, studying which moves made up winning strategies and incorporating those moves into its program.

1957 – Frank Rosenblatt designs the perceptron, the first neural network for computers, which is meant to simulate the thought processes of the human brain.

1967 – The “nearest neighbor” algorithm is written, allowing computers to begin using very basic pattern recognition. It could be used to plan a route for traveling salespeople: start in a random city, then keep moving to the closest city not yet visited until every city has been covered in a short tour.

1979 – Students at Stanford University build the “Stanford Cart,” which can navigate around obstacles in a room on its own.

1981 – Gerald DeJong introduces the concept of explanation-based learning (EBL). In EBL, a computer analyzes training data and creates a general rule it can follow by discarding irrelevant data.

1985 – Terry Sejnowski develops NetTalk, which learns to pronounce words in much the same way a baby does.

The 1990s – Work on machine learning shifts from a knowledge-driven approach to a data-driven one. Scientists begin creating computer programs that analyze large amounts of data and draw conclusions (or “learn”) from the results.

1997 – IBM’s Deep Blue defeats the reigning world chess champion, Garry Kasparov.

2006 – Geoffrey Hinton introduces the term “deep learning” to describe new algorithms that enable computers to “see” and recognize objects and text in images and videos.

2010 – The Microsoft Kinect can track 20 human features at a rate of 30 times per second, allowing users to interact with computers through movements and gestures.

2011 – IBM’s Watson beats its human competitors at Jeopardy!

2011 – Google Brain is developed, and its deep neural network can learn to discover and categorize objects in much the same way a cat does.

2012 – Google’s X Lab develops a machine learning algorithm that can browse YouTube videos on its own and identify the ones that contain cats.

2014 – Facebook develops DeepFace, a software algorithm that can identify or verify people in photos with the same level of accuracy as humans.

2015 – Amazon releases a platform for machine learning.

2015 – Microsoft creates the Distributed Machine Learning Toolkit, which enables machine learning problems to be distributed efficiently across multiple computers.

2015 – More than 3,000 AI and robotics researchers, joined by Stephen Hawking, Elon Musk, and Steve Wozniak, among many others, sign an open letter warning of the danger of autonomous weapons that select and engage targets without human intervention.

2016 – Google’s artificial intelligence algorithm defeats a professional player at the Chinese board game Go, which is regarded as the world’s most complex board game and is considerably harder than chess. The AlphaGo algorithm, developed by Google DeepMind, won all five games of the match.
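The route-planning idea from the 1967 entry can be sketched in a few lines of Python. This is a minimal illustration of the nearest-neighbor heuristic, not a reconstruction of the original program: the city names, coordinates, and the function name `nearest_neighbor_tour` are all made up for the example. Note that the heuristic is greedy, so the tour it produces is usually short but not guaranteed to be the shortest possible.

```python
import math

# Hypothetical cities with (x, y) coordinates, invented for this example.
CITIES = {
    "A": (0, 0),
    "B": (1, 1),
    "C": (5, 2),
    "D": (2, 3),
}

def nearest_neighbor_tour(cities, start):
    """Visit every city once, always moving to the closest unvisited city."""
    unvisited = set(cities) - {start}
    tour = [start]
    current = start
    while unvisited:
        # Pick the unvisited city with the smallest straight-line distance.
        nearest = min(
            unvisited,
            key=lambda name: math.dist(cities[current], cities[name]),
        )
        tour.append(nearest)
        unvisited.remove(nearest)
        current = nearest
    return tour

tour = nearest_neighbor_tour(CITIES, start="A")
print(tour)
```

Starting from a different city can give a different tour, which is why the heuristic is often run from several starting points and the shortest result kept.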

Current Situation Of Machine Learning

Machine learning now underpins some of the most important technological advances. It is used in the emerging field of autonomous vehicles and in space exploration, where it helps discover exoplanets. Stanford University recently defined machine learning as “the science of getting computers to act without being explicitly programmed.” Machine learning has also spurred a range of concepts and technologies, including supervised and unsupervised learning, new algorithms for robots, the Internet of Things, analytics tools, and chatbots. Here are seven typical applications of machine learning in business today:

- Analyzing Sales Data: Streamlining the data

- Mobile Real-Time Personalization: Promoting the experience

- Fraud Detection: Detecting pattern changes

- Product Recommendations: Customer personalization

- Learning Management Systems: Decision-making programs

- Dynamic Pricing: Flexible pricing based on a need or demand

- Natural Language Processing: Speaking with humans

The longer a machine learning model is in use, the more adaptive and accurate it becomes. Combining ML algorithms with new computing technologies improves scalability and efficiency. Used together with business analytics, machine learning can resolve a range of organizational complexities. Modern ML models can make predictions about everything from disease outbreaks to the rise and fall of stocks.

Google is currently experimenting with a machine learning approach called instruction fine-tuning. The goal is to train an ML model to handle natural language processing problems in a generalized way. Instead of teaching the model to solve just one type of problem, the process trains it to solve a wide variety of problems.
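To make the idea more concrete, here is a rough sketch of how training examples from several different tasks might be rewritten as plain-language instructions and mixed into one dataset. It only shows the data-preparation step, not the training itself, and it does not reflect Google's actual pipeline: the task names, templates, example texts, and the helper `to_instruction_example` are all hypothetical.

```python
# Hypothetical instruction templates, one per task type.
TEMPLATES = {
    "translate": "Translate the following sentence to French: {text}",
    "sentiment": "Is the sentiment of this review positive or negative? {text}",
    "summarize": "Summarize the following passage in one sentence: {text}",
}

def to_instruction_example(task, text, answer):
    """Turn a (task, input, answer) triple into a prompt/response pair."""
    return {"prompt": TEMPLATES[task].format(text=text), "response": answer}

# Made-up raw examples drawn from three unrelated tasks.
raw_examples = [
    ("translate", "Good morning.", "Bonjour."),
    ("sentiment", "The battery died after a day.", "negative"),
    ("summarize", "The meeting covered budgets, hiring, and the launch date.",
     "The meeting reviewed budgets, hiring, and launch timing."),
]

# One mixed dataset: a single model would be fine-tuned on all of these,
# so it learns to follow instructions rather than one fixed task.
mixed_dataset = [to_instruction_example(*example) for example in raw_examples]

for example in mixed_dataset:
    print(example["prompt"], "->", example["response"])
```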

Machine Learning And AI Take Separate Paths

In the late 1970s and early 1980s, artificial intelligence research concentrated on logical, knowledge-based methods rather than algorithms. Researchers in computer science and artificial intelligence also abandoned work on neural networks. As a result, artificial intelligence and machine learning split apart. Until then, machine learning had served as a training program for AI.

After being split off into its own field, the machine learning industry, which employed many researchers and technicians, struggled for almost ten years. Its goal shifted from developing artificial intelligence to solving practical problems related to providing services. Its emphasis moved from techniques inherited from AI research to methods drawn from probability theory and statistics. During this time the ML industry kept its focus on neural networks, and it flourished in the 1990s. Much of this success came from the growth of the Internet: the industry benefited from the expanding availability of digital data and from the ability to share its services online.

Conclusion

Machine learning (ML) is a crucial tool for leveraging technologies related to artificial intelligence (AI).

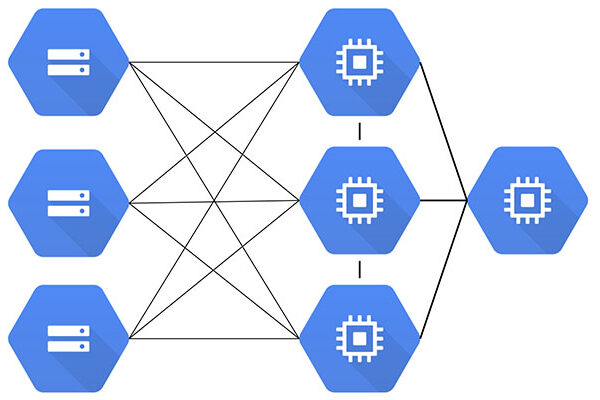

For many organizations today, machine learning is an essential component of contemporary business and research. It uses algorithms and neural network models to help computer systems perform better over time.

Machine learning algorithms automatically build a mathematical model from sample data, also referred to as “training data,” and use that model to make decisions without being specifically programmed to make them.
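As a small illustration of building a model from training data, the sketch below trains a single perceptron, the kind of unit mentioned in the 1957 entry, to reproduce the logical AND function. Nothing here comes from a specific library or from the original perceptron hardware: the function names, the learning rate, and the epoch limit are assumptions chosen for the example. The rule for AND is never written into the program; the weights are adjusted from the four labeled samples alone.

```python
def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Learn weights and a bias from (features, label) pairs with labels 0 or 1."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        mistakes = 0
        for features, label in samples:
            prediction = predict(weights, bias, features)
            error = label - prediction  # -1, 0, or +1
            if error != 0:
                # Nudge the weights toward the correct answer.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, features)]
                bias += learning_rate * error
                mistakes += 1
        if mistakes == 0:  # every training sample is classified correctly
            break
    return weights, bias

def predict(weights, bias, features):
    """Output 1 if the weighted sum is positive, otherwise 0."""
    activation = sum(w * x for w, x in zip(weights, features)) + bias
    return 1 if activation > 0 else 0

# Training data: inputs and the desired output of logical AND.
training_data = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(training_data)
for features, label in training_data:
    print(features, "->", predict(weights, bias, features))
```

A single perceptron can only learn patterns that a straight line can separate, which is one reason later work moved to networks with multiple layers.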